Small and mid-sized enterprises deploying artificial intelligence face a rapidly evolving regulatory landscape in 2026. New laws across the EU and US states demand transparency, bias audits, and risk management regardless of company size. Navigating these requirements can feel overwhelming, especially with limited compliance budgets and expertise. This guide provides a structured roadmap to help you inventory AI systems, assess risks, implement proportional controls, and maintain ongoing governance. You'll learn practical steps to comply with the EU AI Act, US state regulations, and NIST frameworks while leveraging AI to compete effectively. By following this approach, your SME can harness AI's benefits while meeting legal obligations and building customer trust.

Table of Contents

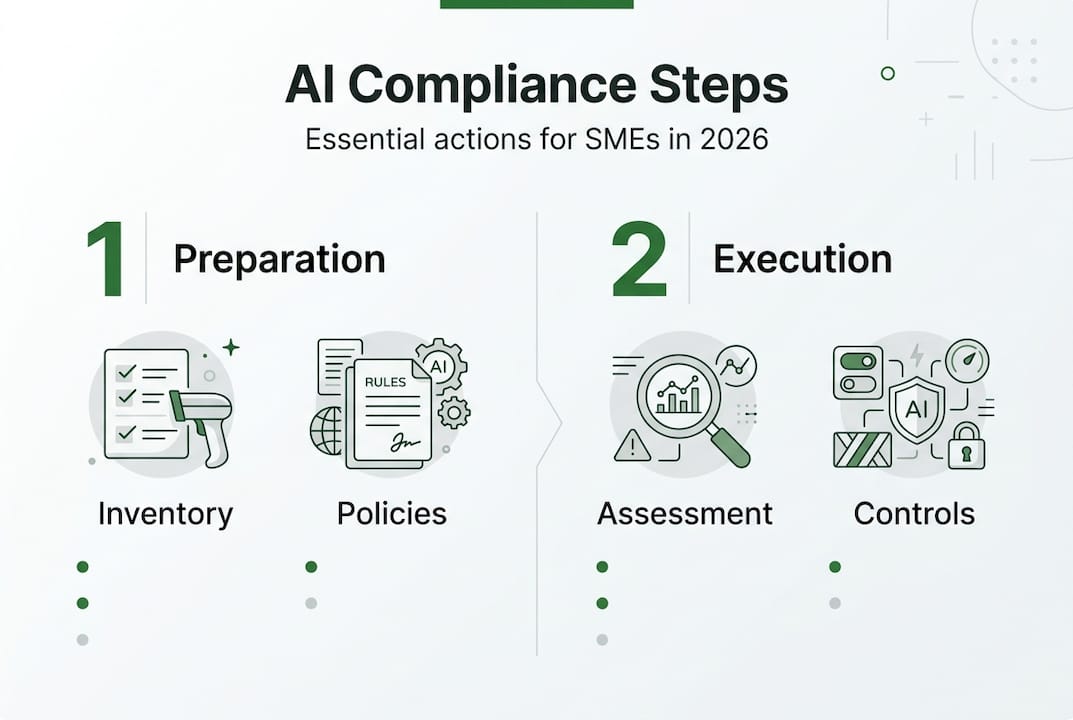

- Preparing Your SME For AI Compliance In 2026

- Executing AI Compliance: Risk Assessment And Phased Governance

- Verifying AI Compliance And Managing Ongoing Governance

- Explore AI Compliance Solutions With NOBISYS

- FAQ

Key takeaways

| Point | Details |
|---|---|
| Inventory and assess | SMEs must document all AI systems in use and evaluate risks under evolving regulations to establish a compliance baseline. |
| Tiered risk approach | The EU AI Act categorizes AI by risk level, offering simplified requirements for smaller businesses deploying low-risk systems. |
| State-level mandates | US states like California and New York require bias audits and transparency disclosures for high-risk AI uses regardless of company size. |
| Phased implementation | Breaking compliance into stages reduces upfront costs and complexity, allowing SMEs to prioritize highest-risk applications first. |
| Human oversight critical | Maintaining human-in-the-loop processes and robust documentation prevents edge case failures and ensures regulatory accountability. |

Preparing your SME for AI compliance in 2026

Before diving into detailed risk assessments and controls, you need a clear picture of your AI footprint and the regulations that apply to your business. Start by creating a comprehensive inventory of every AI system you use, from customer service chatbots to predictive analytics tools. Document each system's purpose, data sources, decision-making role, and vendor information. This inventory becomes your compliance foundation and helps you spot high-risk applications that demand immediate attention.
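As a minimal sketch of what such an inventory could look like in practice, the record below captures the fields named above. The class name, field names, and the two sample systems are illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass, asdict
import json


@dataclass
class AISystemRecord:
    """One row of the AI inventory; all field names are illustrative."""
    name: str
    purpose: str
    data_sources: list[str]
    decision_role: str            # e.g. "advisory" or "automated decision"
    vendor: str
    affects_people: bool = False  # flags systems that need early risk review


inventory = [
    AISystemRecord(
        name="support-chatbot",
        purpose="Answer customer service questions",
        data_sources=["help-center articles", "chat transcripts"],
        decision_role="advisory",
        vendor="ExampleVendor A",  # hypothetical vendor
    ),
    AISystemRecord(
        name="resume-screener",
        purpose="Rank job applicants",
        data_sources=["applicant CVs"],
        decision_role="automated decision",
        vendor="ExampleVendor B",  # hypothetical vendor
        affects_people=True,
    ),
]

# Systems that make or influence decisions about people get attention first.
needs_review = [s.name for s in inventory if s.affects_people]
print(needs_review)

# Export the inventory as JSON so it can be shared or version-controlled.
print(json.dumps([asdict(s) for s in inventory], indent=2))
```

Even a spreadsheet with the same columns would serve the purpose; the point is that every system has the same documented fields.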

Next, develop clear AI use policies aligned with your business goals and regulatory requirements. Your policies should define acceptable AI applications, prohibited uses, data handling standards, and approval workflows for new AI deployments. Together with an AI inventory, risk assessments, human oversight, and maintained documentation, these policies form the core of a defensible compliance program. They give your team guardrails and demonstrate to regulators that you take AI governance seriously.

Understanding which regulations apply to your business is essential. The EU AI Act uses a risk-based framework that categorizes AI systems as prohibited, high-risk, limited-risk, or minimal-risk. If you serve EU customers or use AI systems that could impact EU individuals, you fall under this regulation regardless of where your business is located. US state laws in California, New York, and Colorado impose specific requirements for AI used in hiring, housing, and credit decisions. The NIST AI Risk Management Framework provides voluntary guidance that many businesses adopt as a compliance baseline. Familiarize yourself with these frameworks to determine your obligations.

Perform an initial risk assessment focusing on AI applications that make or significantly influence decisions about people. Consider whether your systems could produce discriminatory outcomes, safety hazards, privacy violations, or misleading information. High-risk categories typically include employment screening, loan approvals, medical diagnostics, and law enforcement applications. Even if you're using vendor-provided AI tools, you remain responsible for compliance in most jurisdictions.

Set up governance structures that emphasize human oversight at critical decision points. Designate a compliance officer or team responsible for AI governance, even if this role is part-time initially. Establish review processes for AI outputs before they affect customers or employees. Create escalation paths for edge cases and unexpected system behavior. AICoreSpecs offers frameworks to help SMEs structure these governance processes efficiently. Your governance structure should include regular audits, incident response protocols, and continuous monitoring to catch issues early.

Key preparation steps include:

- Complete AI system inventory with vendor details and use cases

- Draft AI use policies covering data handling and approval workflows

- Identify applicable regulations based on geography and AI applications

- Conduct preliminary risk assessment focusing on high-impact systems

- Assign governance roles and establish human oversight checkpoints

Executing AI compliance: risk assessment and phased governance

Once you've completed your preparation, it's time to execute detailed risk assessments and implement proportional controls. Start by classifying each AI system according to regulatory risk tiers. The EU AI Act takes a risk-based approach, sorting systems into prohibited, high-risk, limited-risk, and minimal-risk categories that determine your compliance obligations. Prohibited practices include social scoring and, with narrow law-enforcement exceptions, real-time remote biometric identification in public spaces. High-risk systems require conformity assessments, technical documentation, and ongoing monitoring. Limited-risk systems need transparency disclosures, while minimal-risk systems face few requirements.

Conduct detailed risk assessments for each high-risk system, examining potential for bias, safety failures, privacy breaches, and transparency gaps. Test your AI models with diverse datasets to identify discriminatory patterns across protected characteristics like race, gender, and age. Evaluate whether your systems could cause physical harm, financial loss, or reputational damage if they malfunction. Assess data quality, model accuracy, and the robustness of your training processes. Document every finding and the remediation steps you plan to take.

Implement controls proportionate to each system's risk level. High-risk systems demand rigorous testing protocols, human review of critical decisions, audit trails, and regular performance monitoring. You might need third-party conformity assessments for certain EU AI Act categories. Limited-risk systems require clear disclosures that users are interacting with AI, but fewer technical controls. Minimal-risk systems can operate with basic documentation and periodic reviews. Tailor your compliance investments to match actual risk rather than applying blanket controls across all systems.
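One way to keep controls proportionate is to encode the tier-to-control mapping once and look it up per system. The sketch below simply restates the controls listed in the paragraph above; the names `RiskTier`, `CONTROLS`, and `required_controls` are hypothetical, and the mapping is not a legal determination of what any regulation requires.

```python
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited-risk"
    MINIMAL = "minimal-risk"


# Illustrative mapping taken from the controls described in this guide.
CONTROLS = {
    RiskTier.HIGH: [
        "rigorous testing protocol",
        "human review of critical decisions",
        "audit trail",
        "regular performance monitoring",
    ],
    RiskTier.LIMITED: ["disclosure that users are interacting with AI"],
    RiskTier.MINIMAL: ["basic documentation", "periodic review"],
}


def required_controls(tier: RiskTier) -> list[str]:
    """Return the control checklist for a tier, refusing prohibited systems."""
    if tier is RiskTier.PROHIBITED:
        raise ValueError("prohibited systems must not be deployed")
    return CONTROLS[tier]


print(required_controls(RiskTier.LIMITED))
```

Raising an error for the prohibited tier, rather than returning an empty checklist, makes it harder to mistake "no controls listed" for "nothing to do".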

Phased compliance reduces upfront costs and complexity, making regulatory requirements manageable for resource-constrained SMEs. Begin with your highest-risk systems and those facing the nearest compliance deadlines. The EU AI Act phases in requirements over 6 to 36 months from its entry into force in August 2024, depending on risk category, giving you time to prioritize. Focus first on systems that directly impact individuals' rights or safety. Once you've addressed critical risks, expand to medium- and low-risk applications. This approach spreads costs over time and lets you learn from early implementations.

Keep thorough documentation for audits and regulatory reporting. Maintain records of your risk assessments, testing results, human oversight decisions, incident reports, and system changes. Document the datasets used to train models, including their sources and any preprocessing steps. Create audit trails showing who approved AI deployments and what review processes were followed. State-level AI regulations increasingly demand this documentation as proof of compliance, and regulators can request it during investigations.
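An audit trail is more convincing when later edits to it are detectable. The sketch below shows one common technique, a hash chain, in which each entry stores the SHA-256 hash of the previous one. It is an illustration under stated assumptions: the function names, event names, and in-memory list are hypothetical, and a production system would persist entries to durable storage.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder hash for the first entry


def append_entry(log, event, actor, details):
    """Append a record that is chained to the previous record's hash."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "actor": actor,
        "details": details,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    entry["hash"] = hashlib.sha256(payload).hexdigest()
    log.append(entry)
    return entry


def verify(log):
    """Return True if no entry has been altered or reordered."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if entry["prev_hash"] != prev:
            return False
        if hashlib.sha256(payload).hexdigest() != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


log = []
append_entry(log, "deployment_approved", "compliance.officer", {"system": "resume-screener"})
append_entry(log, "bias_audit_completed", "external.auditor", {"system": "resume-screener"})
print(verify(log))
```

Changing any field of an earlier entry after the fact causes `verify` to return `False`, which is the property an auditor cares about.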

Pro Tip: Create a compliance calendar tracking deadlines for each regulation affecting your business, then work backward to schedule risk assessments, testing, and documentation tasks with buffer time for unexpected issues.
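Working backward from a deadline is simple date arithmetic. The following sketch assumes a hypothetical `backward_schedule` helper, example task durations, and an example deadline; substitute the dates and effort estimates that apply to your own systems.

```python
from datetime import date, timedelta


def backward_schedule(deadline, tasks, buffer_days=14):
    """Lay tasks out backward from the deadline, leaving a buffer at the end.

    tasks is a list of (name, duration_days) in the order they must happen.
    Returns a list of (name, start_date, end_date) in chronological order.
    """
    end = deadline - timedelta(days=buffer_days)
    plan = []
    for name, duration in reversed(tasks):
        start = end - timedelta(days=duration)
        plan.append((name, start, end))
        end = start
    return list(reversed(plan))


# Example durations and deadline are assumptions for illustration only.
tasks = [("risk assessment", 30), ("testing", 21), ("documentation", 14)]
plan = backward_schedule(date(2026, 8, 2), tasks)

for name, start, end in plan:
    print(f"{name}: {start} to {end}")
```

With these example inputs the risk assessment has to start in mid-May to leave two weeks of buffer before the deadline.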

Here's a phased compliance roadmap:

- Months 1-3: Complete risk classification and prioritize high-risk systems for immediate action

- Months 4-6: Conduct detailed risk assessments and implement controls for top-priority systems

- Months 7-9: Expand controls to medium-risk systems and begin regular monitoring

- Months 10-12: Address remaining systems and establish ongoing governance processes

- Ongoing: Maintain documentation, conduct periodic audits, and update controls as regulations evolve
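The ordering behind this roadmap (highest risk first, then nearest deadline) can be sketched as a two-key sort. The system names, tiers, and deadlines below are made-up examples.

```python
from datetime import date

# Lower number means higher priority; tier labels are illustrative.
TIER_ORDER = {"high": 0, "limited": 1, "minimal": 2}

systems = [
    {"name": "spam-filter", "tier": "minimal", "deadline": date(2027, 1, 31)},
    {"name": "resume-screener", "tier": "high", "deadline": date(2026, 8, 2)},
    {"name": "support-chatbot", "tier": "limited", "deadline": date(2026, 8, 2)},
    {"name": "credit-scorer", "tier": "high", "deadline": date(2026, 6, 30)},
]

# Sort by risk tier first, then by the nearest compliance deadline.
queue = sorted(systems, key=lambda s: (TIER_ORDER[s["tier"]], s["deadline"]))
order = [s["name"] for s in queue]
print(order)
```

Here both high-risk systems come first, with the earlier deadline leading, followed by the limited-risk and minimal-risk systems.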

| Risk Category | Key Requirements | Typical SME Examples |
|---|---|---|
| Prohibited | Cannot deploy under any circumstances | Social scoring systems, real-time public biometric surveillance |
| High-risk | Conformity assessment, documentation, monitoring | Hiring algorithms, credit scoring, medical diagnostics |
| Limited-risk | Transparency disclosures only | Customer service chatbots, content recommendation engines |
| Minimal-risk | Basic documentation and periodic review | Spam filters, inventory optimization tools |

Verifying AI compliance and managing ongoing governance

Verifying that your compliance efforts actually work requires regular audits, continuous monitoring, and adaptive controls. Conduct bias and performance audits on your AI systems at least annually, or more frequently for high-risk applications. Test models against diverse demographic groups to detect discriminatory patterns that might emerge as data distributions shift. Measure accuracy, precision, recall, and fairness metrics relevant to each system's purpose. Compare current performance against baseline metrics to spot degradation over time.
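To make these metrics concrete, here is a small dependency-free sketch that computes precision, recall, and a per-group impact ratio (each group's selection rate divided by the highest group's rate). The function names and toy data are assumptions for illustration; the four-fifths (0.8) figure mentioned afterward is a widely used rule of thumb, not a threshold this guide or any specific regulation prescribes for your system.

```python
from collections import defaultdict


def precision_recall(y_true, y_pred):
    """Precision and recall for binary labels (1 = positive outcome)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def impact_ratios(groups, y_pred):
    """Selection rate of each group relative to the most-selected group."""
    selected = defaultdict(int)
    total = defaultdict(int)
    for g, p in zip(groups, y_pred):
        total[g] += 1
        selected[g] += p
    rates = {g: selected[g] / total[g] for g in total}
    best = max(rates.values())
    return {g: (r / best if best else 0.0) for g, r in rates.items()}


# Toy data: eight decisions across two demographic groups.
y_true = [1, 0, 1, 1, 0, 1, 0, 1]
y_pred = [1, 0, 1, 1, 0, 1, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

precision, recall = precision_recall(y_true, y_pred)
ratios = impact_ratios(groups, y_pred)
print(precision, recall, ratios)
```

In this toy data, group B is selected at one third the rate of group A, far below the 0.8 rule of thumb, so it would warrant investigation even though overall precision looks perfect.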

Implement human oversight for critical decisions to catch edge cases and unexpected system behavior. Edge cases are often cited as the cause of as much as 80% of AI failures, which makes human-in-the-loop processes essential for maintaining trust and compliance. Train your team to recognize when AI outputs seem questionable and establish clear escalation procedures. For high-stakes decisions like loan denials or employment terminations, require human review before finalizing outcomes. Document these reviews to demonstrate regulatory compliance and build an audit trail.
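A human-in-the-loop rule can be as simple as a routing function that sends every high-stakes decision, and every low-confidence one, to a reviewer. The decision type labels, the `route_decision` name, and the 0.9 confidence floor are illustrative assumptions.

```python
# Decision types that always require a human, per the examples above.
HIGH_STAKES = {"loan_denial", "employment_termination"}


def route_decision(decision_type, model_confidence, confidence_floor=0.9):
    """Return 'human_review' or 'automated' for a single AI output."""
    if decision_type in HIGH_STAKES:
        return "human_review"  # never finalize these automatically
    if model_confidence < confidence_floor:
        return "human_review"  # uncertain outputs are escalated
    return "automated"


print(route_decision("loan_denial", 0.99))
print(route_decision("product_recommendation", 0.72))
print(route_decision("product_recommendation", 0.95))
```

Note that model confidence never overrides the high-stakes rule: a loan denial goes to a person even when the model is very sure.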

Use synthetic data and monitoring tools to detect emerging risks before they cause harm. Synthetic datasets let you test AI systems against rare scenarios and edge cases without exposing real customer data. Monitoring tools track model performance in production, alerting you to accuracy drops, bias drift, or unusual prediction patterns. Set thresholds that trigger automatic alerts when key metrics fall outside acceptable ranges. This proactive approach helps you address issues before customers or regulators notice them.
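Threshold-based alerting of this kind can be sketched in a few lines. The metric names and limits below are placeholder assumptions; appropriate values depend on each system's purpose and baseline performance.

```python
# Each metric has a direction ("min" = must stay above, "max" = must stay below).
THRESHOLDS = {
    "accuracy": ("min", 0.90),
    "impact_ratio": ("min", 0.80),
    "null_input_rate": ("max", 0.05),
}


def check_metrics(metrics):
    """Return a list of alert messages for metrics outside their range."""
    alerts = []
    for name, (kind, limit) in THRESHOLDS.items():
        value = metrics.get(name)
        if value is None:
            alerts.append(f"{name} missing from report")
        elif kind == "min" and value < limit:
            alerts.append(f"{name} below {limit}: {value}")
        elif kind == "max" and value > limit:
            alerts.append(f"{name} above {limit}: {value}")
    return alerts


alerts = check_metrics({"accuracy": 0.93, "impact_ratio": 0.72, "null_input_rate": 0.02})
print(alerts)
```

Treating a missing metric as an alert is a deliberate choice: a monitoring job that silently stops reporting is itself a risk.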

Compare automated controls with human-verified processes to optimize your governance approach. Automated bias detection tools can scan large datasets quickly but may miss context-specific issues. Human reviewers bring judgment and domain expertise but can't scale to review every decision. The optimal mix depends on your risk level, decision volume, and available resources. High-risk systems benefit from layered controls combining automated monitoring with periodic human audits.

Maintain documentation and update compliance processes as laws evolve. Regulations rarely stand still, and new requirements emerge as governments learn from early AI deployments. Subscribe to regulatory updates from relevant jurisdictions and industry associations. Review your compliance program quarterly to incorporate new guidance and address gaps identified through audits. Budget AI compliance resources as an ongoing operational expense rather than a one-time project, because governance is continuous work.

Pro Tip: Create a compliance dashboard tracking key metrics like audit completion rates, human review percentages, incident counts, and regulatory deadline status so you can spot problems at a glance and report progress to leadership.
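The numbers behind such a dashboard are straightforward ratios and counts. This sketch assumes a hypothetical `dashboard_summary` function and invented sample figures; a real dashboard would pull these values from your audit and decision logs.

```python
from datetime import date


def dashboard_summary(audits_done, audits_planned, decisions_reviewed,
                      decisions_total, incidents, deadlines, today):
    """Summarize the four dashboard metrics as a plain dictionary."""
    overdue = sorted(name for name, due in deadlines.items() if due < today)
    return {
        "audit_completion_rate": audits_done / audits_planned if audits_planned else 0.0,
        "human_review_pct": 100 * decisions_reviewed / decisions_total if decisions_total else 0.0,
        "incident_count": len(incidents),
        "overdue_deadlines": overdue,
    }


summary = dashboard_summary(
    audits_done=3,
    audits_planned=4,
    decisions_reviewed=120,
    decisions_total=800,
    incidents=["chatbot gave outdated refund policy"],
    deadlines={"bias audit": date(2026, 3, 31), "policy review": date(2026, 9, 30)},
    today=date(2026, 6, 1),
)
print(summary)
```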

Ongoing governance activities:

- Schedule quarterly bias audits for high-risk systems and annual reviews for others

- Monitor AI performance metrics continuously with automated alerting for anomalies

- Conduct monthly team training on AI governance policies and regulatory updates

- Review and update documentation whenever systems change or new regulations emerge

- Perform annual third-party audits to validate your compliance program's effectiveness

| Control Type | Strengths | Limitations | Best Use Cases |
|---|---|---|---|
| Automated monitoring | Scales to high volumes, catches statistical anomalies quickly | May miss context-specific issues, requires careful threshold tuning | Continuous performance tracking, bias drift detection |
| Human review | Brings judgment and domain expertise, catches edge cases | Doesn't scale to every decision, subject to reviewer bias | High-stakes decisions, escalated cases, periodic audits |
| Hybrid approach | Combines speed with judgment, layered defense | More complex to implement and coordinate | High-risk systems requiring both scale and accuracy |

Explore AI compliance solutions with NOBISYS

Navigating AI compliance doesn't have to overwhelm your team or drain your budget. NOBISYS offers specialized compliance tools and governance platforms designed specifically for small and mid-sized enterprises facing regulatory requirements in 2026. Our solutions support every stage of compliance, from risk assessment and documentation to ongoing monitoring and human oversight integration. The AICoreSpecs compliance framework provides templates, checklists, and automated workflows that streamline compliance with multilayered regulations across EU and US jurisdictions.

Our AIPrototype governance platform helps you implement proportional controls matched to each system's risk level, reducing unnecessary costs while ensuring regulatory accountability. Scalable options let you start with critical systems and expand coverage as your compliance program matures. Explore how NOBISYS AI compliance solutions can transform regulatory obligations into competitive advantages by building customer trust and enabling responsible AI innovation.

FAQ

How do I know if my SME is subject to the EU AI Act?

Applicability depends on whether your AI systems or services impact individuals in the EU, regardless of where your business is located. SMEs with fewer than 250 employees and under €50M turnover deploying AI affecting EU users fall under the Act but benefit from simplified compliance requirements for certain risk categories. If you serve EU customers, use EU-based cloud providers, or deploy AI systems that could affect EU residents, you should assume the regulation applies and conduct a detailed applicability assessment.

What are the typical costs of AI compliance for SMEs?

Compliance budgets vary significantly based on jurisdiction, risk level, and the number of AI systems you operate. Initial setup costs range from $15,000 to $75,000, with annual ongoing expenses between $5,000 and $20,000 for most SMEs. EU high-risk systems requiring conformity assessments and extensive documentation push costs toward the higher end. Phased approaches that prioritize critical systems first help manage budget impact by spreading investments over multiple quarters rather than demanding large upfront expenditures.

How can SMEs manage compliance risks with limited resources?

Focus on your highest-risk systems first and adopt a phased implementation approach that matches your budget and timeline. Use technology platforms to automate documentation, monitoring, and reporting tasks that would otherwise require dedicated staff. German SMEs score high on GDPR familiarity but lower on AI Act readiness due to resource constraints, which suggests that building on existing compliance knowledge through incremental investments is more realistic than attempting a comprehensive program all at once. Consider budgeting AI compliance as an ongoing operational expense rather than a capital project to smooth the cash flow impact.

What US state regulations should SMEs be aware of for AI?

California, New York, and Colorado have enacted laws targeting AI use in employment, housing, credit, and other high-risk applications. These regulations require bias audits and transparency disclosures for covered AI systems regardless of company size, meaning SMEs face the same obligations as large enterprises. New York City's hiring algorithm law demands annual bias audits and candidate notifications, while California's laws address automated decision-making across multiple sectors. Track state-level AI regulations in jurisdictions where you operate or serve customers, as requirements continue expanding rapidly.